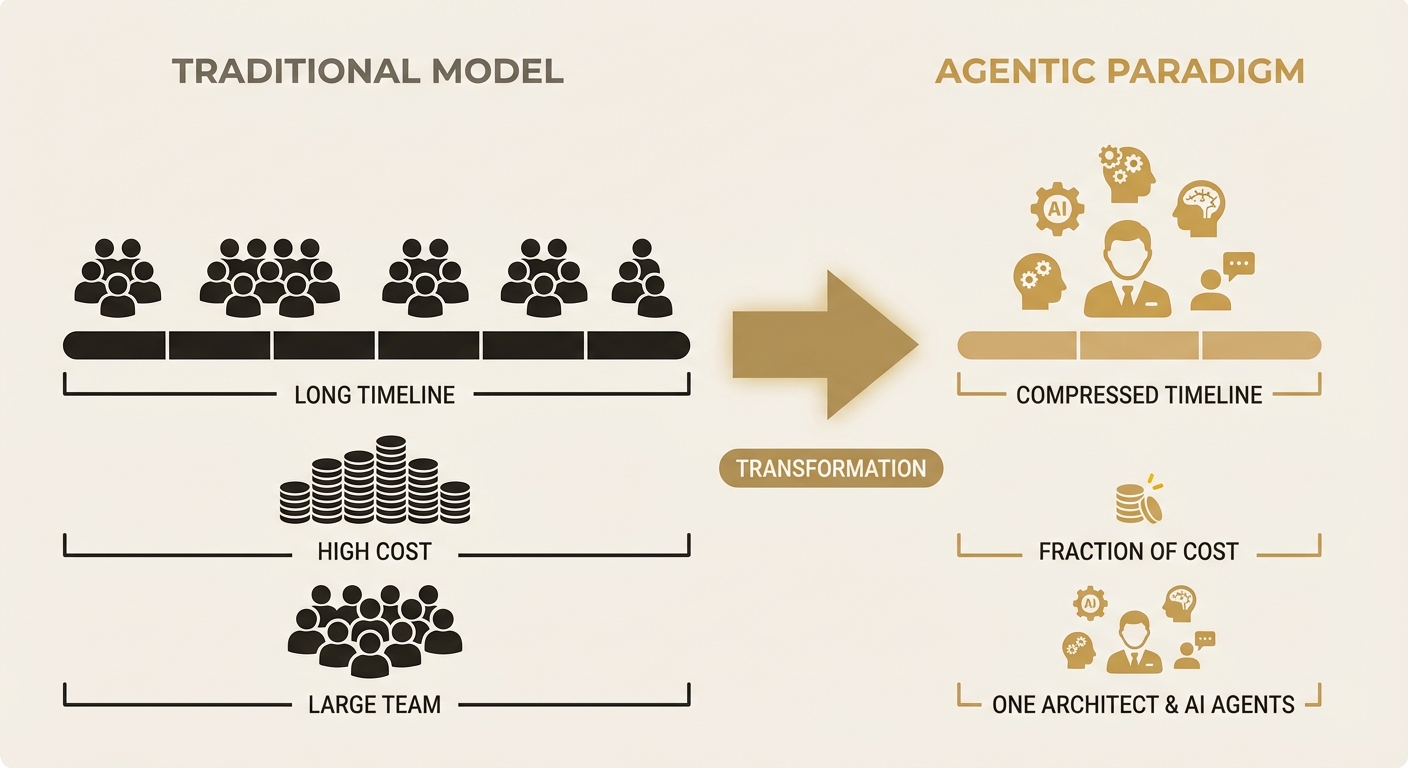

Agentic development changes the economics

The economics of custom software development have shifted. AI-assisted development patterns are compressing delivery timelines from months to weeks while maintaining production-grade quality. This piece looks at why traditional consultancy pricing no longer reflects the actual cost of delivery, and what that means for organisations that have been priced out of bespoke solutions.

The traditional consultancy cost structure

Enterprise software projects have historically followed a predictable cost pattern: a $500K-$2M engagement spanning 6-18 months, staffed by a team of 5-15 consultants billing $250-$600/hour. The deliverable is typically a custom platform, integration layer, or analytics system built on established enterprise frameworks.

That pricing reflected assumptions that were accurate until recently: custom software requires large teams, complexity scales linearly with features, testing and deployment are manual bottlenecks, and the available tooling requires significant boilerplate before anything useful gets built.

Every one of those assumptions has been invalidated by the current generation of development tools. And the pace of change is accelerating.

What changed: the Anthropic cadence

Anthropic is shipping enterprise-grade features for Claude on a weekly cadence. In the past 12 months alone: extended thinking (complex multi-step reasoning), tool use (structured function calling), computer use (UI interaction), the Model Context Protocol (standardised tool integration), and Claude Code (an AI development environment that writes, tests, and deploys code).

Any one of these would have taken a traditional development team months to build from scratch. Together, they represent a platform shift on the scale of cloud computing or mobile frameworks, except it's playing out in months rather than years.

And this isn't specific to Anthropic. The Model Context Protocol is an open standard. The architectural patterns work across providers. If Anthropic doubles its pricing tomorrow, you swap the model and keep the architecture. That model-agnostic foundation is a deliberate design choice, not an accident.
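A minimal sketch of what "swap the model and keep the architecture" can look like in code. Everything here is hypothetical and for illustration only: `ModelProvider`, `StubProviderA`, `StubProviderB`, and `summarise_ticket` are invented names, and the stubs stand in for real vendor SDK calls rather than reproducing any vendor's API.

```python
from dataclasses import dataclass
from typing import Protocol


class ModelProvider(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubProviderA:
    """Stand-in for one vendor; a real adapter would call that vendor's SDK here."""

    name: str = "provider-a"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class StubProviderB:
    """Stand-in for a second vendor with the same method shape."""

    name: str = "provider-b"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def summarise_ticket(model: ModelProvider, ticket_text: str) -> str:
    """Application logic depends only on the interface, not on a vendor."""
    return model.complete(f"Summarise: {ticket_text}")


# Swapping providers is a one-argument change; summarise_ticket is untouched.
print(summarise_ticket(StubProviderA(), "login page times out"))
print(summarise_ticket(StubProviderB(), "login page times out"))
```

The design choice is that vendor-specific code lives only inside the adapter classes, so a pricing or capability change at one provider touches one class rather than the whole system.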

"We're building on a platform that didn't exist 12 months ago. The question isn't whether AI-assisted development works. It's whether you're using it before your competitors do."

— Token Theory

Agentic development in practice

To be clear: agentic development is not "AI writes all the code." It's a pattern where a human architect makes design decisions, defines system boundaries, and validates outputs, while AI agents handle the high-volume implementation work: boilerplate, test suites, UI components, database migrations, and integration debugging.

In practice, this consistently delivers 3-5x compression in delivery timelines. For example, a project that would have taken 12 weeks with a traditional team of four can be delivered in 3-4 weeks with one senior architect and AI development agents, which is a 3-4x compression in calendar time. The quality is equivalent or better:

- Test coverage is higher because AI agents generate comprehensive test suites by default, not as an afterthought

- Code consistency improves because a single architect maintains the full mental model rather than coordinating across four developers

- Iteration cycles shorten because changes that previously required sprint planning and a two-week cycle can be tested and deployed in hours

- Documentation is generated alongside code rather than as a post-delivery obligation
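The timeline example above can be worked through as simple arithmetic. This is a rough sketch using only the figures quoted in this piece (12 weeks with a team of four, versus 3-4 weeks with one architect). It assumes everyone is full-time and counts human effort only, so it ignores the cost of the AI tooling itself. The function names are illustrative.

```python
def compression(traditional_weeks: float, agentic_weeks: float) -> float:
    """Calendar-time compression factor between two delivery timelines."""
    return traditional_weeks / agentic_weeks


def person_weeks(team_size: int, weeks: float) -> float:
    """Total human effort, assuming every team member is full-time."""
    return team_size * weeks


traditional_effort = person_weeks(team_size=4, weeks=12)  # 48 person-weeks
agentic_effort_fast = person_weeks(team_size=1, weeks=3)  # 3 person-weeks
agentic_effort_slow = person_weeks(team_size=1, weeks=4)  # 4 person-weeks

print(compression(12, 4))                        # 3.0
print(compression(12, 3))                        # 4.0
print(traditional_effort / agentic_effort_slow)  # 12.0
print(traditional_effort / agentic_effort_fast)  # 16.0
```

Calendar time compresses by 3-4x in this example, while human effort drops by 12-16x, because the team shrinks at the same time as the schedule.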

The pricing disconnect

This is where the economics get uncomfortable. If a project that previously cost $500K can now be delivered for $75K-$150K, what are clients paying for in the traditional model?

Mostly organisational overhead. Account managers, project managers, junior developers ramping up on the codebase, meetings about meetings, the change request process. None of this is inherently wasteful. It evolved to manage risk when development was slow and expensive. But when the underlying cost of delivery drops by 70%, the overhead doesn't, and the client is subsidising a cost structure designed for a different era.
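As a quick check on the numbers, here is the saving implied by the price points quoted above ($500K traditional, $75K-$150K agentic). It is a sketch on those illustrative figures only, not a pricing model, and `saving_percent` is a name invented for this example.

```python
def saving_percent(traditional_cost: int, new_cost: int) -> float:
    """Percentage reduction in price relative to the traditional engagement."""
    return (traditional_cost - new_cost) * 100 / traditional_cost


TRADITIONAL = 500_000  # the $500K project quoted in the text

print(saving_percent(TRADITIONAL, 150_000))  # 70.0
print(saving_percent(TRADITIONAL, 75_000))   # 85.0
```

So the 70% figure corresponds to the upper end of the new price range. At the lower end, the reduction is 85%.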

Who this unlocks

The biggest impact isn't on enterprises already spending $500K on custom builds. They'll get lower costs and faster delivery. But the real shift is for organisations that were never in the market at all.

Mid-market companies with $5M-$50M revenue. Non-profits running on $500K-$5M budgets. Australian government departments with tight procurement cycles. These organisations have always needed custom tools. They just couldn't afford the traditional consulting model. At $75K-$150K with 4-8 week delivery, bespoke AI-powered tools move from impossible to budgetable.

This is the market Token Theory was built to serve. Not by cutting quality, but by using a development paradigm that makes serious tools accessible to organisations that were previously priced out.

The counter-arguments

The obvious objection is that faster must mean worse. It's a reasonable concern, but it doesn't hold up. The compression comes from eliminating categories of work that used to be necessary but aren't anymore. Writing boilerplate: eliminated. Manual database migrations: automated. Building component libraries from scratch: unnecessary. Writing test suites after the fact: generated alongside code. Managing handoffs between developers: eliminated when one architect holds the full context.

The quality floor has moved up. A solo architect with AI tooling produces more consistent, better-tested output than a team of four junior-to-mid developers coordinating over Jira tickets. The bottleneck has shifted from "how many people can we staff" to "how good is the architect's judgement."

There's a harder question around key-person risk, and it's worth being honest about. If one architect holds the full context, what happens if they're unavailable? In practice, this is less of a problem than it sounds. Because AI agents generate standardised, well-documented, comprehensively tested code, handover is actually easier than untangling a legacy system built by a fragmented team of juniors. The codebase is consistent. The tests are thorough. The documentation exists. That said, we build every project with explicit handover documentation as a deliverable, not an afterthought.

There's also the question of ongoing maintenance. Building the thing is 70% cheaper, but who maintains it? This depends on the client. Some have internal teams who take ownership. Others engage us on a retainer. The important thing is that a well-structured, well-tested codebase built by one architect is significantly easier to maintain than a sprawling system built by a rotating team of contractors.

Further reading

- McKinsey Global Institute, "The Economic Potential of Generative AI", June 2023: quantifies productivity multipliers across software development tasks

- GitHub, "Research: Quantifying GitHub Copilot's Impact on Developer Productivity and Happiness", 2022: 55% faster task completion in a controlled study

- Anthropic, "Claude for Enterprise" (anthropic.com): current capabilities including Claude Code, tool use, and MCP

- Sequoia Capital, "Generative AI's Act Two", 2023: analysis of how AI-native development changes cost structures

- Thoughtworks Technology Radar: ongoing tracking of AI-assisted development practices in enterprise contexts
